Solana Historical Data: How to Access and Analyze Past On-Chain Activity (2026)


Solana processes thousands of transactions per second and has accumulated billions of records since its genesis block in 2020. Yet accessing that historical data is surprisingly difficult. Unlike Ethereum, where archive nodes are a well-trodden path, Solana's architecture creates a gap that trips up developers, analysts, and researchers regularly.

Whether you are a researcher tracing token flows from 2023, a developer building a portfolio tracker with full history, or an analyst constructing protocol-level dashboards, you need access to data that standard Solana RPC endpoints no longer serve. The good news: several mature platforms now make this data accessible through SQL, APIs, and browser-based tools.

This guide explains why the gap exists, what your options are for bridging it, and how to run practical queries against Solana historical data today.

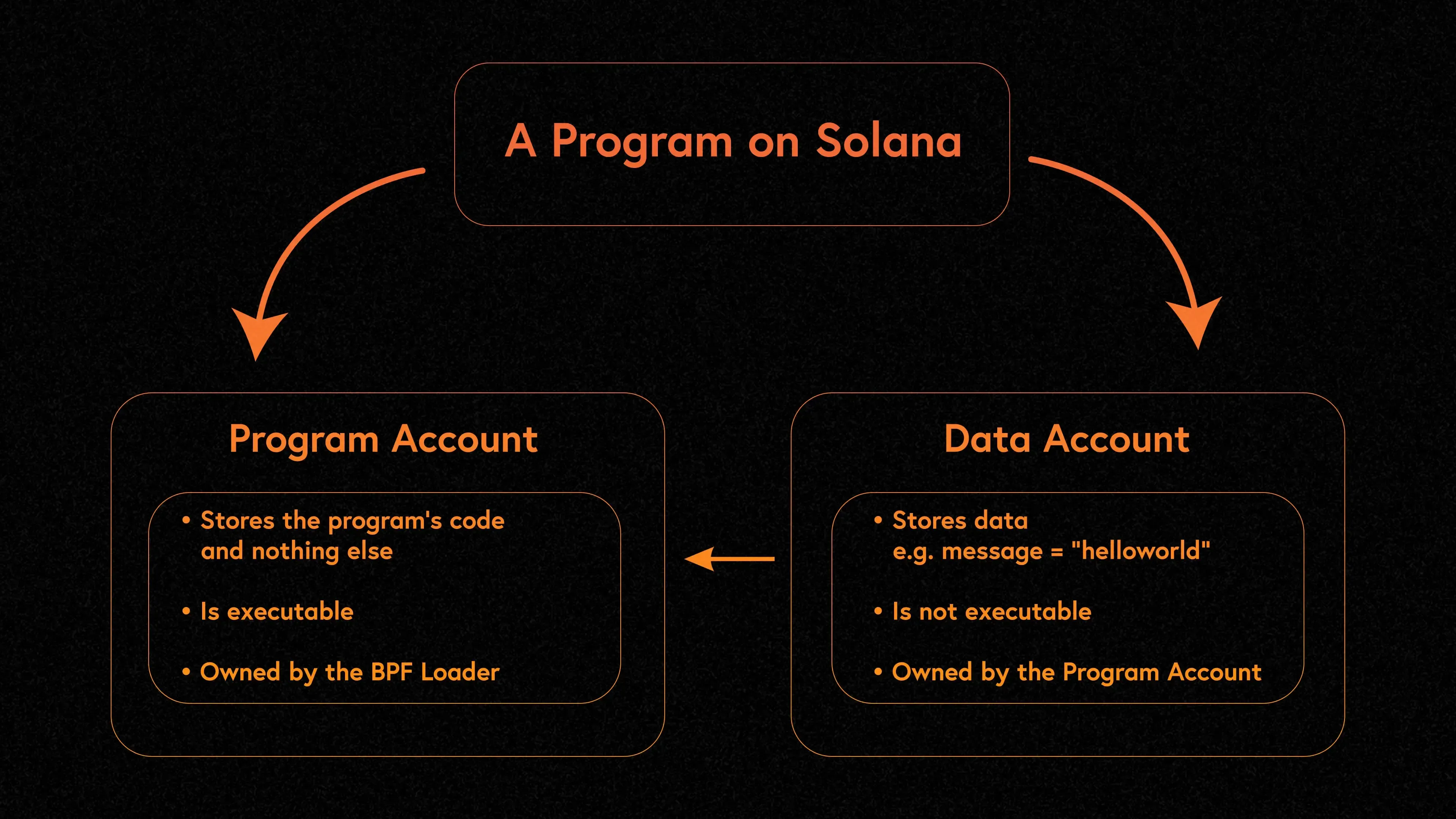

Standard Solana validators only retain roughly two days of transaction history. After that, ledger data is pruned to keep storage requirements manageable -- a necessary tradeoff given Solana's throughput. Even dedicated RPC providers typically retain only a few epochs of recent data through their standard endpoints.

This means that if you try to call getTransaction or getBlock on a transaction from six months ago through a normal RPC node, you will get a null response. The data simply is not there.
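As a rough illustration of that behavior, the sketch below sends a standard `getTransaction` JSON-RPC request and classifies the reply. The endpoint URL is a placeholder, and the helper names (`buildGetTransactionRequest`, `classifyResponse`, `lookupTransaction`) are hypothetical names introduced for this example. Note that a `null` result can also mean the signature is simply unknown to the node, so treat "not available" as ambiguous.

```typescript
// Placeholder endpoint: substitute your own RPC provider URL.
const RPC_URL = "https://api.mainnet-beta.solana.com";

interface RpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params: unknown[];
}

interface RpcResponseBody {
  result?: unknown;
  error?: { message: string };
}

type LookupOutcome =
  | { kind: "found"; transaction: unknown }
  | { kind: "not_available" } // null result: pruned history or unknown signature
  | { kind: "rpc_error"; message: string };

// Build the request body for the standard getTransaction RPC method.
function buildGetTransactionRequest(signature: string): RpcRequest {
  return {
    jsonrpc: "2.0",
    id: 1,
    method: "getTransaction",
    params: [signature, { encoding: "json", maxSupportedTransactionVersion: 0 }],
  };
}

// Turn a parsed JSON-RPC response body into one of three outcomes.
function classifyResponse(body: RpcResponseBody): LookupOutcome {
  if (body.error) {
    return { kind: "rpc_error", message: body.error.message };
  }
  if (body.result === null || body.result === undefined) {
    return { kind: "not_available" };
  }
  return { kind: "found", transaction: body.result };
}

// Perform the lookup against RPC_URL and classify the reply.
async function lookupTransaction(signature: string): Promise<LookupOutcome> {
  const response = await fetch(RPC_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildGetTransactionRequest(signature)),
  });
  return classifyResponse((await response.json()) as RpcResponseBody);
}
```

An application could treat the `not_available` outcome as the signal to retry the lookup against an archival provider or a warehouse.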

Some providers offer "archival" RPC access that retains full ledger history, but these services come at a significant cost premium. Storage requirements for a full Solana archive node exceed 1 PB and grow rapidly, which is why most providers charge separately for historical access.

The problem becomes more concrete when you consider common use cases. A portfolio tracker that needs to display a user's full transaction history cannot rely on RPC alone. A compliance tool that must trace token provenance back to minting cannot query pruned data. An analytics dashboard tracking protocol growth over quarters needs access to months of historical records that no standard RPC node retains.

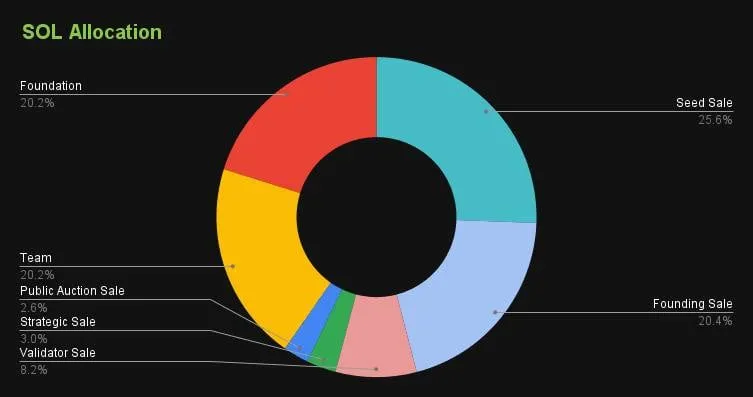

This is not a bug in Solana's design. It is an intentional tradeoff that keeps validator hardware costs low enough to maintain a large, decentralized validator set. The ecosystem has responded by building specialized data infrastructure to fill the gap.

Understanding this limitation is the first step to choosing the right tool for your historical data needs.

Google hosts a public Solana dataset on BigQuery that contains parsed transaction data going back to genesis. This is one of the most complete and accessible sources of Solana historical data available.

The dataset includes tables for blocks, transactions, token transfers, and account activity. It is maintained by the Solana Foundation in partnership with Google, and it receives new data through an automated pipeline that processes finalized blocks and writes them to BigQuery tables.

You can query it using standard SQL:

```sql
-- Find all transactions involving a specific wallet in January 2025
SELECT
  block_timestamp,
  signature,
  fee
FROM `bigquery-public-data.crypto_solana_mainnet_us.transactions`
WHERE
  ARRAY_TO_STRING(account_keys, ',') LIKE '%YourWalletAddress%'
  -- Half-open range so that all of January 31 is included
  AND block_timestamp >= TIMESTAMP '2025-01-01'
  AND block_timestamp < TIMESTAMP '2025-02-01'
ORDER BY block_timestamp DESC
LIMIT 100;
```

BigQuery charges per query based on data scanned, so filtering by date range and specific columns keeps costs low. The free tier (1 TB/month of queries) is enough for most research tasks.
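To make the cost arithmetic concrete, here is a minimal sketch that converts bytes scanned into an estimated charge. The rate of 6.25 USD per TiB is an assumption for illustration and should be checked against current BigQuery on-demand pricing; the function name `estimateQueryCostUsd` is hypothetical. The bytes-scanned figure would typically come from a dry run of the query before executing it.

```typescript
const BYTES_PER_TIB = 1024 ** 4;

// Assumption: on-demand price per TiB scanned. Verify against current pricing.
const ASSUMED_USD_PER_TIB = 6.25;

// Estimate the charge for one query, given how much of the monthly
// free allowance (in bytes) is still unused.
function estimateQueryCostUsd(
  bytesScanned: number,
  freeBytesRemaining: number = 0,
  usdPerTiB: number = ASSUMED_USD_PER_TIB
): number {
  const billableBytes = Math.max(0, bytesScanned - freeBytesRemaining);
  return (billableBytes / BYTES_PER_TIB) * usdPerTiB;
}

// Example: a 2 TiB scan with the full 1 TiB allowance still available.
const exampleCost = estimateQueryCostUsd(2 * BYTES_PER_TIB, BYTES_PER_TIB);
console.log(`Estimated cost: $${exampleCost.toFixed(2)}`);
```

Running an estimate like this before each large query makes it obvious when a tighter date filter or a narrower column list is worth the effort.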

Limitations: the public dataset can lag behind the chain by a few hours, and the schema does not always parse every instruction type cleanly. For recent data (last 30 days), a standard RPC or API is usually faster and cheaper.

Flipside provides curated, decoded Solana tables through its free analytics platform. Unlike raw BigQuery data, Flipside's tables are pre-parsed and labeled -- you get human-readable program names, decoded instruction types, and token metadata joined in automatically.

Flipside is particularly strong for DeFi and NFT historical analysis. A typical query might look like:

```sql
-- Daily swap volume on Jupiter over the past year
SELECT
  DATE_TRUNC('day', block_timestamp) AS day,
  COUNT(*) AS swap_count,
  SUM(swap_amount_usd) AS volume_usd
FROM solana.defi.fact_swaps
WHERE swap_program = 'Jupiter'
  AND block_timestamp >= DATEADD('year', -1, CURRENT_DATE)
GROUP BY day
ORDER BY day;
```

The platform also supports joining Solana data with cross-chain tables, which is useful if you need to compare activity on Solana with Ethereum, Arbitrum, or other networks that Flipside indexes.

Flipside gives you a built-in charting tool, so you can visualize results without exporting. The free tier includes generous compute credits, and results are cached for fast iteration.

For a deeper look at on-chain analysis workflows, see our guide on Solana on-chain data analysis.

Dune Analytics takes a similar SQL approach but emphasizes shareable dashboards and community-built queries. Thousands of existing Solana dashboards cover everything from DEX volumes to validator performance to token holder distributions over time.

Dune's Solana tables use a DuneSQL engine and include decoded program data for major protocols. If someone has already built the analysis you need, you can fork their query and modify it:

```sql
-- Historical SOL staking ratio over time
SELECT
  DATE_TRUNC('week', block_time) AS week,
  SUM(sol_staked) / SUM(total_supply) AS staking_ratio
FROM solana.staking_summary
WHERE block_time >= DATE '2024-01-01'
GROUP BY week
ORDER BY week;
```

Many Solana project teams maintain official Dune dashboards with verified queries, making them a reliable starting point for protocol-specific research.

Dune is strongest when you want to publish findings or track metrics over time with auto-refreshing dashboards. The free tier has query execution limits, but paid plans offer priority execution and private queries.

For comparisons between analytics platforms, check our roundup of the best Solana analytics dashboards.

Helius approaches historical data differently. Rather than a SQL warehouse, it offers API endpoints that return parsed, enriched transaction histories for specific accounts.

The enhanced transaction history endpoints let you pull complete activity for a wallet or token account:

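```typescript
// Fetch parsed transaction history for a wallet via Helius
const walletAddress = "YourWalletAddress"; // placeholder: the wallet to inspect
const apiKey = "your_helius_api_key"; // placeholder: supply your own key

const response = await fetch(
  `https://api.helius.xyz/v0/addresses/${walletAddress}/transactions?api-key=${apiKey}&type=SWAP`
);
if (!response.ok) {
  throw new Error(`Helius request failed with status ${response.status}`);
}
const transactions = await response.json();

// Each transaction includes parsed data:
// { description, type, source, fee, timestamp, nativeTransfers, tokenTransfers }
```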

Helius parses raw transaction data into structured events -- swaps, transfers, NFT sales, staking actions -- with human-readable descriptions. This is invaluable when you need account-level history without writing SQL.

For developers building applications rather than running ad-hoc analysis, Helius is often the most practical choice. You do not need to learn SQL or manage warehouse credentials. The API returns JSON responses that map directly to your application's data models, and the parsed transaction format eliminates the need to decode raw Solana instruction data yourself.

The DAS API also provides historical ownership and metadata for NFTs and compressed tokens, which is difficult to reconstruct from raw ledger data alone.
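A single request to the transaction history endpoint returns only one page of results, so pulling a complete history means paging backwards through it. The sketch below shows one way to do that. It assumes the endpoint accepts `before` (a signature cursor) and `limit` query parameters and that each returned item carries a `signature` field; both are assumptions to confirm against the Helius documentation. The helper names `buildHistoryUrl` and `fetchFullHistory` are hypothetical.

```typescript
interface HistoryPageOptions {
  type?: string;   // e.g. "SWAP"
  before?: string; // assumed cursor: fetch items older than this signature
  limit?: number;  // assumed page size parameter
}

// Assumed minimal shape of one parsed transaction.
interface ParsedTransaction {
  signature: string;
  timestamp: number;
  type: string;
}

// Build the request URL for one page of history.
function buildHistoryUrl(
  address: string,
  apiKey: string,
  options: HistoryPageOptions = {}
): string {
  const url = new URL(`https://api.helius.xyz/v0/addresses/${address}/transactions`);
  url.searchParams.set("api-key", apiKey);
  if (options.type) url.searchParams.set("type", options.type);
  if (options.before) url.searchParams.set("before", options.before);
  if (options.limit !== undefined) url.searchParams.set("limit", String(options.limit));
  return url.toString();
}

// Page backwards until an empty page is returned or maxPages is reached.
async function fetchFullHistory(
  address: string,
  apiKey: string,
  maxPages: number = 10
): Promise<ParsedTransaction[]> {
  const all: ParsedTransaction[] = [];
  let cursor: string | undefined;
  for (let page = 0; page < maxPages; page++) {
    const url = buildHistoryUrl(address, apiKey, cursor ? { before: cursor } : {});
    const response = await fetch(url);
    if (!response.ok) {
      throw new Error(`Helius request failed with status ${response.status}`);
    }
    const batch = (await response.json()) as ParsedTransaction[];
    if (batch.length === 0) break;
    all.push(...batch);
    cursor = batch[batch.length - 1].signature; // oldest item on this page
  }
  return all;
}
```

The `maxPages` cap is a deliberate safety limit so that a very active wallet does not exhaust a rate-limited API key in one call.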

For ad-hoc lookups rather than programmatic analysis, block explorers remain the simplest way to inspect Solana historical data. Solscan maintains a complete indexed copy of the Solana ledger and lets you search any transaction by signature, block by slot number, or account by address -- regardless of age.

When investigating suspicious activity or verifying a specific historical transaction, Solscan is usually the fastest path. You can paste a transaction signature directly into the search bar and get a fully decoded view of the transaction, including inner instructions, token balance changes, and program logs. For older transactions that may involve deprecated programs, Solscan still renders available metadata even when full decoding is not possible.

Solana Beach also provides archival browsing with a focus on validator and epoch-level data. These tools are best for one-off investigations rather than bulk analysis.

For bulk data needs, the Solana Foundation maintains a public archive of ledger snapshots on Google Cloud Storage. These compressed archives can be replayed to reconstruct state at any historical point, though the process requires significant compute resources and Solana CLI expertise.

For a broader look at indexing options, see our guide on the best Solana data indexers.

Constructing a historical price chart for a Solana token requires combining DEX trade data over time. Here is how you would approach it on Flipside:

```sql
-- Daily OHLC prices for a token from DEX trades
-- MIN_BY / MAX_BY return the price at the earliest / latest timestamp of each day,
-- which avoids mixing window functions with GROUP BY
SELECT
  DATE_TRUNC('day', block_timestamp) AS day,
  MIN_BY(price_usd, block_timestamp) AS open,
  MAX(price_usd) AS high,
  MIN(price_usd) AS low,
  MAX_BY(price_usd, block_timestamp) AS close,
  SUM(amount_usd) AS volume
FROM solana.defi.fact_swaps
WHERE token_address = 'YourTokenMintAddress'
  AND block_timestamp >= '2025-01-01'
GROUP BY day
ORDER BY day;
```

This query gives you daily candlestick data derived entirely from on-chain swaps. For tokens that only trade on Solana DEXes, this is often the most reliable historical price source -- centralized exchange APIs may not have the data at all.

You can extend this approach to build multi-token comparison charts, compute rolling averages, or detect volume anomalies. The key advantage of using on-chain DEX data rather than centralized exchange feeds is verifiability -- every price point maps to an actual swap transaction on Solana.
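If you already have raw swap records in your application (for example, exported from a warehouse query), the same daily bucketing can be done client-side. The sketch below is one possible approach; the `Swap` shape and its field names are hypothetical and would need to match whatever your data source returns.

```typescript
// Hypothetical shape of one swap record.
interface Swap {
  timestamp: number; // unix seconds
  priceUsd: number;
  amountUsd: number;
}

interface Candle {
  day: string; // UTC date, YYYY-MM-DD
  open: number;
  high: number;
  low: number;
  close: number;
  volume: number;
}

// Group swaps into daily OHLC candles using UTC day boundaries.
function toDailyCandles(swaps: Swap[]): Candle[] {
  // Sort a copy by time so the first and last swap of each day are open and close.
  const sorted = [...swaps].sort((a, b) => a.timestamp - b.timestamp);
  const byDay = new Map<string, Candle>();

  for (const swap of sorted) {
    const day = new Date(swap.timestamp * 1000).toISOString().slice(0, 10);
    const candle = byDay.get(day);
    if (!candle) {
      byDay.set(day, {
        day,
        open: swap.priceUsd,
        high: swap.priceUsd,
        low: swap.priceUsd,
        close: swap.priceUsd,
        volume: swap.amountUsd,
      });
    } else {
      candle.high = Math.max(candle.high, swap.priceUsd);
      candle.low = Math.min(candle.low, swap.priceUsd);
      candle.close = swap.priceUsd; // latest swap seen so far for this day
      candle.volume += swap.amountUsd;
    }
  }
  // Map preserves insertion order, so candles come out in ascending day order.
  return Array.from(byDay.values());
}
```

Doing the aggregation in the warehouse is usually cheaper for long ranges; a client-side version like this is mainly useful for re-bucketing cached data without issuing another query.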

Tracking how a wallet's behavior changes over months or years can reveal patterns -- accumulation phases, protocol usage shifts, or activity spikes around market events.

Using Dune, you can build a weekly activity profile:

```sql
-- Weekly transaction count and unique programs used by a wallet
SELECT
  DATE_TRUNC('week', block_time) AS week,
  COUNT(*) AS tx_count,
  COUNT(DISTINCT executing_program) AS unique_programs,
  SUM(fee_sol) AS total_fees_sol
FROM solana.transactions
WHERE signer = 'YourWalletAddress'
  AND block_time >= DATE '2024-06-01'
GROUP BY week
ORDER BY week;
```

Pairing this with token transfer data lets you build a complete financial profile: when did the wallet start accumulating a particular token, how frequently does it interact with specific DeFi protocols, and did activity spike around known market events. This level of analysis is standard practice in on-chain research and due diligence workflows.

This kind of longitudinal analysis is impossible with standard RPC because the data simply does not exist on the node anymore. Warehouse platforms solve this by indexing and retaining everything.
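Once historical records are in hand, the weekly profile can also be computed in application code. The following sketch assumes a hypothetical `TxRecord` shape (block time, invoked program IDs, fee in lamports) and buckets records into Monday-based UTC weeks; adjust the field names to your actual data source.

```typescript
// Hypothetical shape of one historical transaction record.
interface TxRecord {
  blockTime: number;    // unix seconds
  programIds: string[]; // programs invoked by the transaction
  feeLamports: number;
}

interface WeeklyActivity {
  week: string; // Monday of the week, YYYY-MM-DD (UTC)
  txCount: number;
  uniquePrograms: number;
  totalFeesSol: number;
}

const LAMPORTS_PER_SOL = 1e9;

// Return the Monday (UTC) of the week containing the given time.
function weekStart(unixSeconds: number): string {
  const date = new Date(unixSeconds * 1000);
  const daysSinceMonday = (date.getUTCDay() + 6) % 7; // Sunday is 0 in getUTCDay
  date.setUTCDate(date.getUTCDate() - daysSinceMonday);
  return date.toISOString().slice(0, 10);
}

// Aggregate records into one row per week, sorted by week ascending.
function weeklyProfile(records: TxRecord[]): WeeklyActivity[] {
  const buckets = new Map<string, { txCount: number; programs: Set<string>; feeLamports: number }>();
  for (const record of records) {
    const week = weekStart(record.blockTime);
    let bucket = buckets.get(week);
    if (!bucket) {
      bucket = { txCount: 0, programs: new Set<string>(), feeLamports: 0 };
      buckets.set(week, bucket);
    }
    bucket.txCount += 1;
    for (const id of record.programIds) bucket.programs.add(id);
    bucket.feeLamports += record.feeLamports;
  }
  return Array.from(buckets.entries())
    .sort(([a], [b]) => (a < b ? -1 : a > b ? 1 : 0))
    .map(([week, bucket]) => ({
      week,
      txCount: bucket.txCount,
      uniquePrograms: bucket.programs.size,
      totalFeesSol: bucket.feeLamports / LAMPORTS_PER_SOL,
    }));
}
```

Summing fees in lamports and converting once at the end keeps the arithmetic in integers for as long as possible.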

| Use case | Best option | Why |
|---|---|---|
| One-off transaction lookup | Solscan, Solana Beach | Instant, no setup |
| Wallet transaction history (API) | Helius | Parsed, structured, account-scoped |
| DeFi protocol analytics | Flipside, Dune | Pre-decoded tables, SQL, charts |
| Bulk research / custom models | BigQuery | Raw data, full history, standard SQL |
| NFT provenance / ownership | Helius DAS API | Purpose-built for asset history |
| Dashboard publishing | Dune | Community, sharing, auto-refresh |

The right choice depends on whether you need raw data or parsed data, one-time queries or recurring dashboards, and how far back you need to go.

Filter aggressively. Solana's volume means even a single day of data can contain hundreds of millions of transactions. Always scope queries by date range, program, or account to avoid scanning terabytes unnecessarily.

Cache results locally. If you are building an application that displays historical data, query the warehouse once and store results in your own database. Repeated warehouse queries for the same data waste credits and add latency.

Combine sources. Use Helius for real-time and recent data, then switch to Flipside or BigQuery for anything older than 30 days. This hybrid approach gives you both speed and depth.
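One way to express this hybrid routing is a small function that splits a requested time range at the 30-day boundary. This is a sketch only: the `routeByAge` name and the `TimeRange` and `RoutedQuery` types are hypothetical, and the 30-day window is taken from the rule of thumb above rather than from any provider guarantee.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

interface TimeRange {
  start: Date;
  end: Date;
}

// Which part of the range goes to the warehouse and which to the API.
interface RoutedQuery {
  warehouse?: TimeRange;
  api?: TimeRange;
}

// Split a requested range at (now - recentWindowDays).
function routeByAge(
  range: TimeRange,
  now: Date = new Date(),
  recentWindowDays: number = 30
): RoutedQuery {
  if (range.end.getTime() < range.start.getTime()) {
    throw new Error("range.end must not precede range.start");
  }
  const cutoff = new Date(now.getTime() - recentWindowDays * MS_PER_DAY);

  if (range.end.getTime() <= cutoff.getTime()) {
    return { warehouse: range }; // entirely older than the window
  }
  if (range.start.getTime() >= cutoff.getTime()) {
    return { api: range }; // entirely inside the window
  }
  // Range straddles the cutoff: older part to the warehouse, newer part to the API.
  return {
    warehouse: { start: range.start, end: cutoff },
    api: { start: cutoff, end: range.end },
  };
}
```

The caller would then issue at most two requests and concatenate the results, with the warehouse portion being a natural candidate for local caching since it no longer changes.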

Validate data quality. Different providers may parse the same transaction differently, especially for complex DeFi interactions involving multiple inner instructions. When accuracy matters, cross-reference results from at least two sources before drawing conclusions.
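A cross-reference check can be as simple as matching records by transaction signature and flagging disagreements. The sketch below assumes both sources have been normalized into a hypothetical `SwapRecord` shape; the 1% relative tolerance is an arbitrary illustrative default, not a recommended threshold.

```typescript
// Hypothetical normalized record; map each provider's output into this shape first.
interface SwapRecord {
  signature: string;
  amountUsd: number;
}

interface CrossCheckReport {
  onlyInFirst: string[];
  onlyInSecond: string[];
  mismatched: string[]; // present in both, but amounts differ beyond tolerance
}

// Compare two sources by signature and report where they disagree.
function crossCheck(
  first: SwapRecord[],
  second: SwapRecord[],
  relativeTolerance: number = 0.01
): CrossCheckReport {
  const secondBySignature = new Map<string, SwapRecord>();
  for (const record of second) secondBySignature.set(record.signature, record);

  const report: CrossCheckReport = { onlyInFirst: [], onlyInSecond: [], mismatched: [] };
  const seenInFirst = new Set<string>();

  for (const record of first) {
    seenInFirst.add(record.signature);
    const other = secondBySignature.get(record.signature);
    if (!other) {
      report.onlyInFirst.push(record.signature);
      continue;
    }
    const scale = Math.max(Math.abs(record.amountUsd), Math.abs(other.amountUsd));
    const difference = Math.abs(record.amountUsd - other.amountUsd);
    if (scale > 0 && difference / scale > relativeTolerance) {
      report.mismatched.push(record.signature);
    }
  }
  for (const record of second) {
    if (!seenInFirst.has(record.signature)) report.onlyInSecond.push(record.signature);
  }
  return report;
}
```

A non-empty `mismatched` list is a prompt to inspect those signatures manually in a block explorer before trusting either number.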

Watch for schema changes. Solana programs upgrade frequently, and warehouse providers update their decoded tables accordingly. Pin your queries to specific table versions or test regularly to catch breaking changes.

The Solana ecosystem continues to improve its data infrastructure. Projects like Yellowstone gRPC and the Geyser plugin framework are making it easier for indexers to capture real-time data feeds, which in turn improves the completeness and latency of warehouse platforms. As Solana adoption grows and more institutional participants require audit-grade historical records, expect archival solutions to become more competitive and accessible.

For now, the combination of warehouse platforms for deep history and API providers for recent data covers the vast majority of use cases. Start with the free tiers, validate your approach, and scale to paid plans only when your query volume or freshness requirements demand it.

The Solana mainnet genesis block was created on March 16, 2020. BigQuery and Flipside both have data going back to genesis, though the earliest months have relatively low transaction volume. Helius and RPC-based solutions typically cover the full history for account-level queries, but may not serve raw block data from the earliest slots. For complete archival access to raw ledger data, the Solana Foundation's Google Cloud Storage snapshots are the most comprehensive source.

Running your own archive node is technically possible, but it is impractical for most teams. A full Solana archive requires over 1 PB of storage and grows by several terabytes per week. The hardware cost alone exceeds most budgets, and maintaining the node requires Solana validator operations expertise. For nearly all use cases, BigQuery, Flipside, or a paid archival RPC plan from a provider like Helius is far more cost-effective than running your own infrastructure.

Several free options exist. Google BigQuery offers 1 TB of free queries per month against the public Solana dataset. Flipside provides free compute credits for SQL queries. Dune has a free tier with execution limits. Solscan and Solana Beach are free for manual browsing. For API-level access, Helius offers a free tier with rate limits. Heavy production usage on any platform will eventually require a paid plan, but research and development can typically stay within free tiers.

Solana's design prioritizes throughput and low latency over storage. At thousands of transactions per second, retaining full history on every validator would require enormous and ever-growing storage, increasing the cost to run a validator and potentially reducing network decentralization. Instead, Solana validators prune old ledger data after roughly two days (about the length of one epoch) and rely on external archival services to preserve the full history. This is a deliberate architectural tradeoff that keeps validator hardware requirements accessible.